
Facebook is continuing to make moves to strengthen its platform in an effort to build trust with its users and advertisers.

In 2019 alone, they’ve made great progress in fighting misinformation and boosting the authority of content posted on the platform.

This week, they posted a slew of announcements to their Newsroom blog regarding the steps they’ve taken, and what they’re planning to do moving forward, to limit the spread of “fake news,” hate speech, and other content that violates Facebook’s Community Standards.

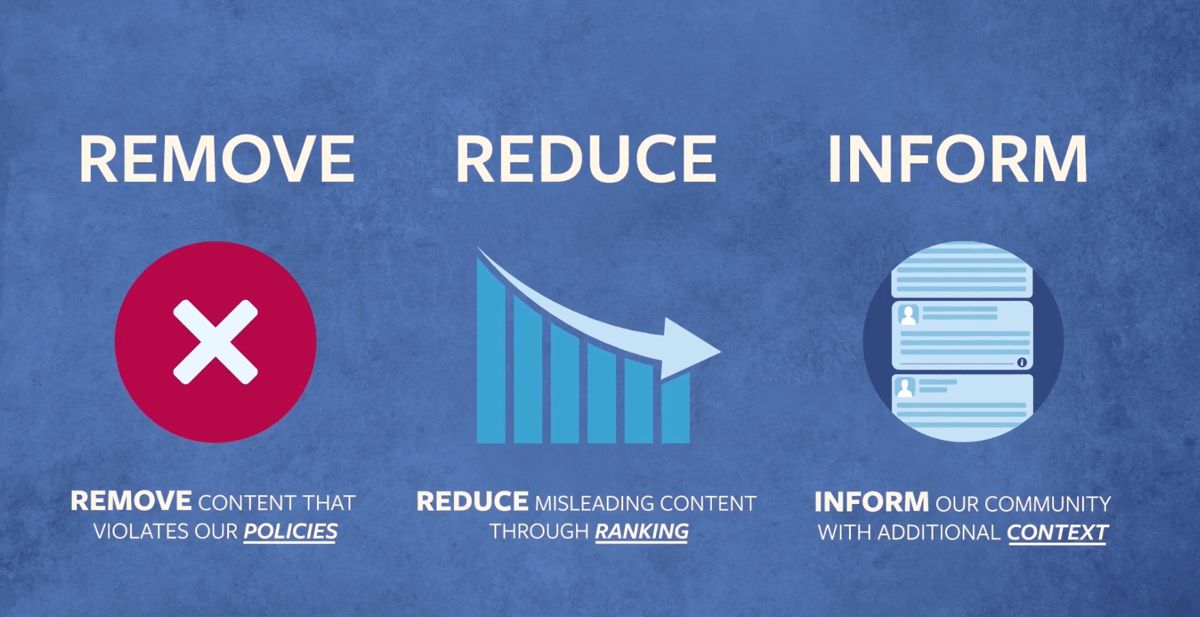

Essentially, Facebook is planning to build upon its “Remove, Reduce, Inform” process first announced in 2016.

To understand the basics of the 3-step plan, here’s a short video Facebook created upon the initial announcement:

Here’s a breakdown of how Facebook hopes to expand upon this process to strengthen their ability to control misleading or harmful content on the platform:

Removing Harmful Content

While Facebook has many measures in place to prevent content that violates its Community Standards from being posted, some can still slip through the cracks.

When that happens, the goal is to catch and remove it as quickly as possible - but doing so has presented challenges.

This problem persists most frequently in closed Facebook Groups. Facebook does have systems in place that will catch around 95% of these infractions, but it found that its team needs to do more to hold Groups accountable for upholding Facebook’s Community Standards.

In response, they have put two new standards in place.

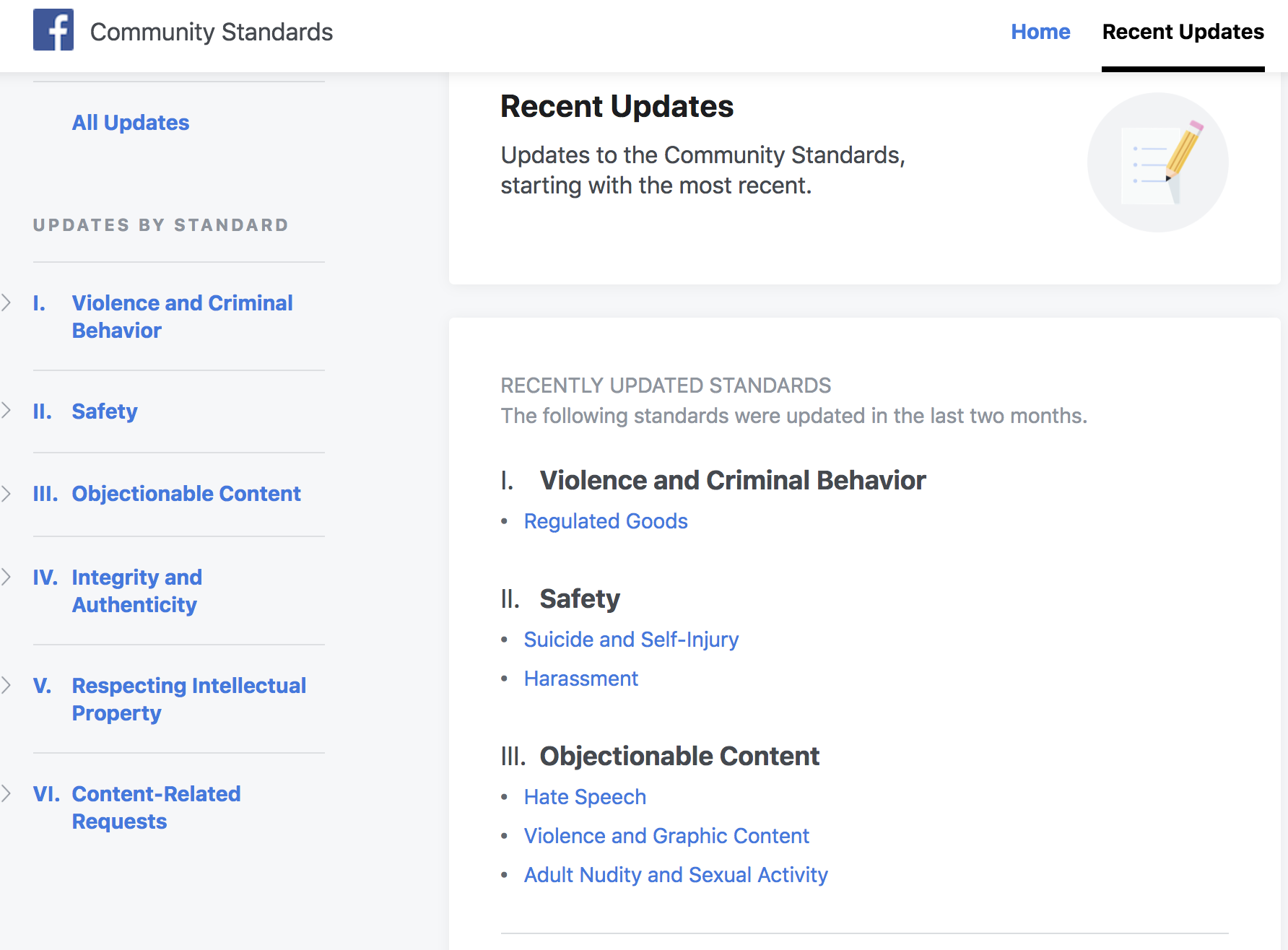

First, they added a “Recent Updates” tab to their Community Standards site. This tab will show any standards that have been added or updated in the last two months.

Because Facebook’s terms can change so frequently, it's important that users have access to up-to-date information on what is considered a violation.

Second, to boost compliance, Facebook is holding group admins more accountable for violations of Community Standards.

“Starting in the coming weeks, when reviewing a group to decide whether or not to take it down, we will look at admin and moderator content violations in that group, including member posts they have approved, as a stronger signal that the group violates our standards.”

Additionally, Facebook is introducing a new tool called Group Quality to increase transparency surrounding what it considers to be a “violation” of its Community Standards and when it enforces them.

The feature will show a historical overview of content that has been removed or flagged for violations, and will show a section for any false news posted in the group. It’s currently unclear if only group admins can see this feature, or if all group members are able to view it.

Reducing Misleading Content

This section refers to content that is problematic or annoying, like clickbait, spam, or fake news - but doesn’t necessarily violate Facebook’s Community Standards.

For example, you could share a blog article on “scientific research” on why the Earth is flat, and even if the content isn’t factual, it doesn’t violate Facebook’s standards.

This “grey” area between what’s just misleading and what’s truly harmful is why Facebook has had such a hard time limiting the spread of misinformation on its platform.

While false news is referenced in the Community Standards, the same section also explains why this has been such a struggle for the platform, and how Facebook approaches the issue:

“Reducing the spread of false news on Facebook is a responsibility that we take seriously. We also recognize that this is a challenging and sensitive issue. We want to help people stay informed without stifling productive public discourse. There is also a fine line between false news and satire or opinion. For these reasons, we don't remove false news from Facebook but instead, significantly reduce its distribution by showing it lower in the News Feed.”

Contrary to popular belief, the right to Free Speech doesn’t apply to private companies like Facebook (I didn’t realize that needed to be said until I saw Twitter’s response to this news).

So while Facebook wants to allow all users to express themselves on the platform, they are well within their rights to put additional guidelines in place to enhance the content posted to the platform.

To expand on these efforts, they’ve set the following new standards in place:

- Collaborating with outside experts to boost fact-checking efforts: One reason misinformation spreads so rapidly is sheer volume: there aren’t enough eyes in the world to keep on top of it all. To create a strategy that will truly discredit false news and promote trustworthy content, Facebook is consulting academics, fact-checking experts, journalists, survey researchers and civil society organizations to build a system that works. They explain this process further in the video below:

- Expanding its third-party fact-checking program: Now, The Associated Press will be “expanding its efforts by debunking false and misleading video misinformation and Spanish-language content appearing on Facebook in the US.”

- Limiting the reach of groups that repeatedly share misinformation: Groups that have consistently been flagged by fact-checkers for sharing misleading articles won’t have as much exposure in members’ News Feeds. This provides additional incentives for admins to police this content on their own, and also limits members from seeing the posts unless they seek them out in the group itself.

- Introducing “Click-Gap” into their News Feed ranking system: “Click-Gap” is a new feature that will help determine how posts show up in your feed. For SEO professionals, this is very similar to how Google evaluates inbound and outbound links directed to your website pages. Facebook states that “Click-Gap looks for domains with a disproportionate number of outbound Facebook clicks compared to their place in the web graph. This can be a sign that the domain is succeeding on News Feed in a way that doesn’t reflect the authority they’ve built outside it and is producing low-quality content.”
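
To make the Click-Gap idea a little more concrete, here’s a rough Python sketch of the comparison Facebook describes. Facebook hasn’t published its actual formula, so everything below is an assumption for illustration only: the `DomainStats` fields, the use of inbound link counts as a stand-in for a domain’s “place in the web graph,” the smoothing, the `threshold` value, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DomainStats:
    domain: str
    facebook_clicks: int  # outbound clicks from Facebook to the domain (made-up figure)
    inbound_links: int    # links from other sites; an assumed proxy for web-graph standing


def click_gap_scores(stats):
    """Map each domain to (share of Facebook clicks) / (share of inbound links)."""
    total_clicks = sum(s.facebook_clicks for s in stats)
    total_links = sum(s.inbound_links for s in stats)
    scores = {}
    for s in stats:
        if total_clicks == 0:
            scores[s.domain] = 0.0
            continue
        click_share = s.facebook_clicks / total_clicks
        # Add-one smoothing so a domain with zero inbound links cannot divide by zero.
        link_share = (s.inbound_links + 1) / (total_links + len(stats))
        scores[s.domain] = click_share / link_share
    return scores


def flag_disproportionate(stats, threshold=3.0):
    """Return domains whose Facebook traffic far outweighs their web authority."""
    scores = click_gap_scores(stats)
    return sorted(d for d, score in scores.items() if score > threshold)


# Hypothetical sample data, not real measurements.
sample = [
    DomainStats("established-news.example", facebook_clicks=5000, inbound_links=9000),
    DomainStats("niche-blog.example", facebook_clicks=500, inbound_links=800),
    DomainStats("viral-bait.example", facebook_clicks=4500, inbound_links=200),
]

if __name__ == "__main__":
    for domain in flag_disproportionate(sample):
        print(domain)
```

In this made-up sample, only the domain that draws nearly half of the Facebook clicks while holding a tiny share of inbound links crosses the threshold. For marketers, the takeaway is the same as in SEO: traffic from Facebook is more likely to hold up when your site has also earned authority elsewhere on the web.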

Informing the Facebook Community

In order to truly make an impact, it’s important to not only limit the spread of misinformation, but also educate the public to inform their own perspective.

The problem with social media, and the internet in general, is that if you see a headline or read a statistic, you’re inclined to believe it.

Because Facebook users can post whatever they want, it makes it difficult to separate what’s credible and what isn’t.

So, rather than simply take reactive measures by removing and limiting the exposure of misinformation on Facebook, it’s important for people to know how credible these sources are before they decide to believe them.

In the past year, Facebook has added features to help users determine a source's credibility on their own. Now, they’ve announced expansions of these tools that will provide even more transparency into these sources.

First, Facebook’s Context Button is getting some new features. This tool was first launched in April 2018, and provides people with more background information about the publishers and articles that appear in their News Feed so they can better decide what to read, trust and share.

Now, Facebook is adding Trust Indicators to the Context Button. These were created by the Trust Project, an association of news organizations dedicated to fairness, accuracy, and transparency in news reporting. Moving forward, articles on Facebook will be evaluated against the Trust Indicators’ eight core standards to assess credibility. Additionally, the Context Button will now apply to photos that have been reviewed by third-party fact-checkers.

Facebook’s Page Quality tab will also be getting new information to help users better understand whether a page is biased or trustworthy. This will be continually updated over time as new information is identified - but Facebook is starting off this month by updating the page’s status with respect to how often it posts “clickbait” articles.

Final Thoughts

Whether it’s Facebook’s fault or not, it’s undeniable that the spread of misinformation has gotten out of hand.

It’s true that everyone is entitled to their own opinion, but it’s important that people draw their conclusions based on facts, not false information.

These updates have important implications for marketers as well. If we want prospects and customers to trust our information, we should be mindful of the groups we’re in, the articles we share, and the content we put out.

For example, if you post an article that has exaggerated statistics or information from a source that has the potential to be flagged by Facebook, it might reflect poorly on your brand even if you thought it was factual before you posted it.

These updates are a reminder that the industry is changing, and it’s likely that social media will soon have similar restrictions to what you’d see on cable television. This isn’t necessarily a bad thing, but does have the potential to disrupt the current way you use social media to market your business.

So, as always, stay on top of the changes, and be proactive in adapting your strategy.
